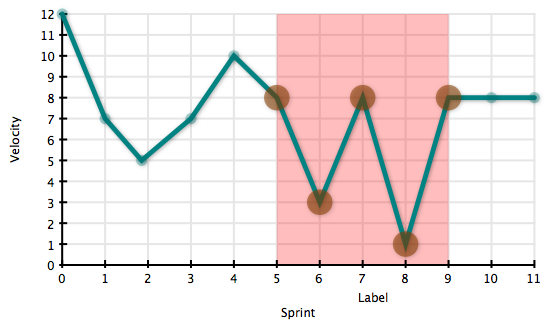

Our development team recently went through a transition period that involved the introduction of a couple of new team members. We aggressively track velocity week to week. These numbers help not only in planning releases, but also in gauging the health of the team and process. I generally disregard the first few sprints (sprint = 1 week) while the team gets comfortable with each other and the tools.

I should note here that the team is using a Fibonacci scale of estimates and generally has features between 1 and 5 points. This project is also made up of significant legacy code and is being “stabilized”. Bugs come in regularly and don’t get estimated. Big changes to the application need to take into account existing users and their equally legacy data. (Legacy here means old and originally developed with minimal QA and tight time constraints.)

The first 5 sprints for this team were quite encouraging. After a 3-week bootstrapping period there was a real sense that the team was building up to a strong pace. The team had a rough sprint 6, but they seemed to bounce back the following week. Then sprint 8 collapsed again. By this point we were looking at 4 weeks with only 20 points completed. What was going on? Sprint 4 had us expecting twice that pace.

I’m very fortunate to have a reliable team, a very skilled and experienced team lead, and a patient set of stakeholders. As we began seeing the fluctuating velocities for what they were (a problem with our process), all of us began looking for causes and solutions. I’ve seen this before. The team got about 6 weeks into the project, everyone was beginning to feel confident that we were doing things right, and then we started to lose control. We’d nail one sprint only to completely miss on the next one. It was frustrating and demoralizing.

We got through it.

The root cause came down to unanticipated externalities. A task would get held up because we needed an icon from the designers, or we needed content for the new email, or our acceptance criteria were vague enough that developers couldn’t tell if they were done until QA approved or rejected the work. As a result, tasks would get rejected at the end of every sprint, often for minor issues.

What did we do to fix it?

The biggest change was to add detail to the acceptance criteria (ACs) and make sure our QA process would verify exactly to them. The vague ACs were ultimately my fault. Getting QA to focus strictly on the ACs put pressure on me to get as much into them as I could; otherwise I’d need to create a new user story to tune the feature, and that could mess up my timelines. I like to call this approach “strategic pressure points”. The goal is to apply positive and negative pressure in the right places, so that the side effects encourage the best practices we all say we should follow but often lose the motivation to keep up after the first few tries.

The other shift in thinking came more as a side effect of the first. That was to hold on to user stories until I had all of the content and graphics ready to go with them. In an ideal world we’d be able to drop a designer directly on the team and turn the graphics problem from an external issue into an internal one. That would give the team (plus one designer) control over their ability to complete the stories that they accept into a sprint.

The key to this turnaround is a return to the basics. What do the numbers say? What is going wrong or right; what is causing frustration in the team members? And, what can you do to make things incrementally better? Keeping our eyes on the metrics that we are collecting helped us track the instability of our process and allowed us to focus on specifics when looking for problems. Constantly looking for things that aren’t working perfectly and finding ways to make them slightly more perfect helped us respond to the problems rationally and see rapid recovery from the issues that were affecting us.
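For anyone who wants to watch for the same pattern, here is a minimal sketch of how the week-to-week numbers could be checked for instability. It is not the tooling we used. The function names, the 4-sprint window, the 0.5 threshold, and the sprint totals are all assumptions made up for illustration; the totals are only shaped to resemble the story above (a build-up, then four sprints adding up to 20 points).

```python
from statistics import mean, pstdev

def rolling_velocity(points, window=4):
    """Average points completed over each trailing `window` sprints."""
    return [
        mean(points[i - window + 1 : i + 1])
        for i in range(window - 1, len(points))
    ]

def is_unstable(points, window=4, threshold=0.5):
    """Flag the latest `window` sprints when their spread is large
    relative to their average (coefficient of variation)."""
    recent = points[-window:]
    avg = mean(recent)
    if avg == 0:
        return True  # nothing completed at all: treat as a problem
    return pstdev(recent) / avg > threshold

# Hypothetical weekly totals, NOT the team's real data.
velocities = [2, 5, 8, 10, 9, 3, 8, 0]

if __name__ == "__main__":
    print("rolling average:", rolling_velocity(velocities))
    print("unstable:", is_unstable(velocities))
```

The rolling average smooths out a single bad week, while the spread check is what catches the "nail one sprint, miss the next" pattern. Both the window and the threshold would need tuning to a team's own history.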